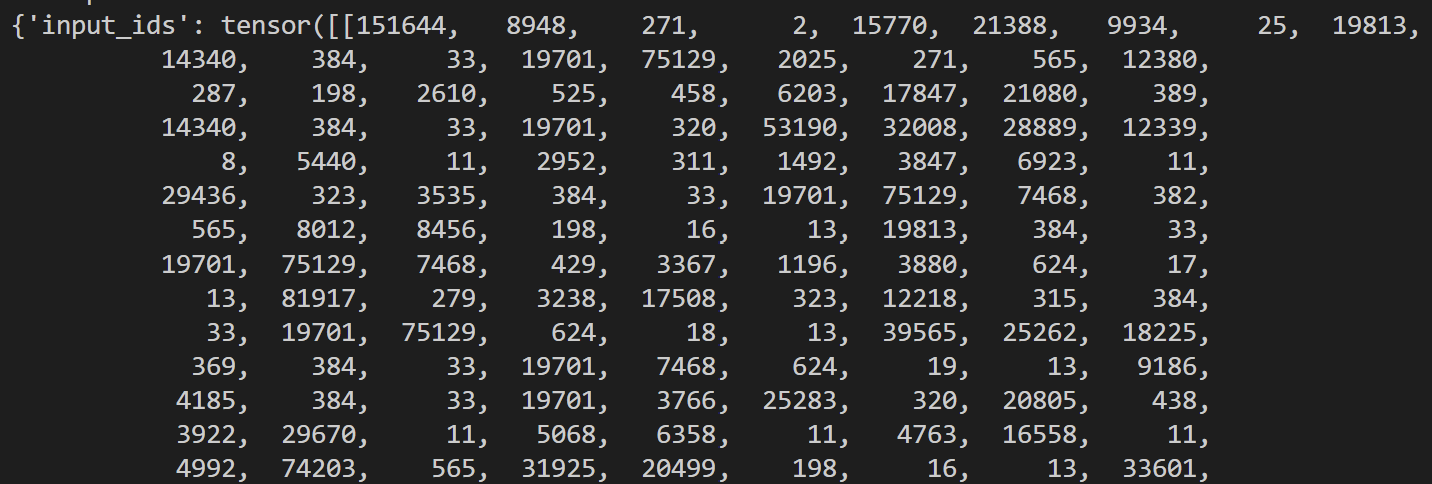

| import os
import random
import time

from peft import LoraConfig, PeftModel
from transformers import AutoModelForCausalLM, AutoTokenizer

# Load the base model from the local ./Qwen directory.
model = AutoModelForCausalLM.from_pretrained(
    "./Qwen",
    device_map="auto",
    trust_remote_code=True,
)

# LoRA settings, kept for reference only. They are not used below:
# PeftModel.from_pretrained reads the configuration saved with the adapter.
peft_config = LoraConfig(
    task_type="CAUSAL_LM",
    inference_mode=False,
    r=8,
    lora_alpha=32,
    lora_dropout=0.1,
    target_modules=["q_proj", "v_proj"],
)

# Attach the trained LoRA adapter to the base model.
model = PeftModel.from_pretrained(model, "lora_adapter10-30")
print("load peft")

tokenizer = AutoTokenizer.from_pretrained("./Qwen")

prompt = "your system prompt"
# The user prompt may contain a {num} placeholder, filled in on each run.
prompt_u = "your user prompt"
MAX_LENGTH = 1500  # maximum number of newly generated tokens


def predict(messages, model, tokenizer):
    # Render the chat messages into a single prompt string.
    text = tokenizer.apply_chat_template(
        messages,
        tokenize=False,
        add_generation_prompt=True,
        enable_thinking=False,
    )
    # Tokenize and move the tensors to the device the model is on.
    model_inputs = tokenizer([text], return_tensors="pt").to(model.device)
    generated_ids = model.generate(
        model_inputs.input_ids,
        attention_mask=model_inputs.attention_mask,
        max_new_tokens=MAX_LENGTH,
        temperature=1.0,
    )
    # Drop the prompt tokens so that only the newly generated tokens remain.
    generated_ids = [
        output_ids[len(input_ids):]
        for input_ids, output_ids in zip(model_inputs.input_ids, generated_ids)
    ]
    response = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
    return response


save_dir = "./seeds/"
os.makedirs(save_dir, exist_ok=True)  # create the output directory if missing
num_runs = 10
total = 0.0

for i in range(num_runs):
    start_time = time.time()
    messages = [
        {"role": "system", "content": prompt},
        {"role": "user", "content": prompt_u.format(num=random.randint(5, 40))},
    ]
    response = predict(messages, model, tokenizer)
    end_time = time.time()
    total += end_time - start_time
    print(f"Generation time: {end_time - start_time:.2f} seconds")
    print(f"\nLLM:{response}\n")

    # Save each response to its own seed file.
    file = os.path.join(save_dir, f"seed_{i}.txt")
    with open(file, "w", encoding="utf-8") as f:
        f.write(response)

print(f"average generation time: {total / num_runs:.2f} seconds")

|